Clawbot (often referred to as OpenClaw or MoltBot) represents the transition from simple LLM wrappers to sophisticated autonomous systems. Unlike standard chatbots, Clawbot operates on a 4-layer architecture (Communication, Reasoning, Action, and Data) that allows it to execute complex shell commands, manage long-term memory via FTS5 in SQLite, and maintain a consistent identity through a “soul.md” configuration file. For Agix Technologies, Clawbot serves as a blueprint for high-ROI agentic AI that prioritizes local privacy and deterministic execution over cloud-dependent black boxes.

Most AI “agents” you see on LinkedIn are toys. They are prompts in a trench coat. If you want a system that actually handles devops tasks, manages your WhatsApp leads, or crawls the web without hallucinating its way into a recursive loop, you need a real framework.

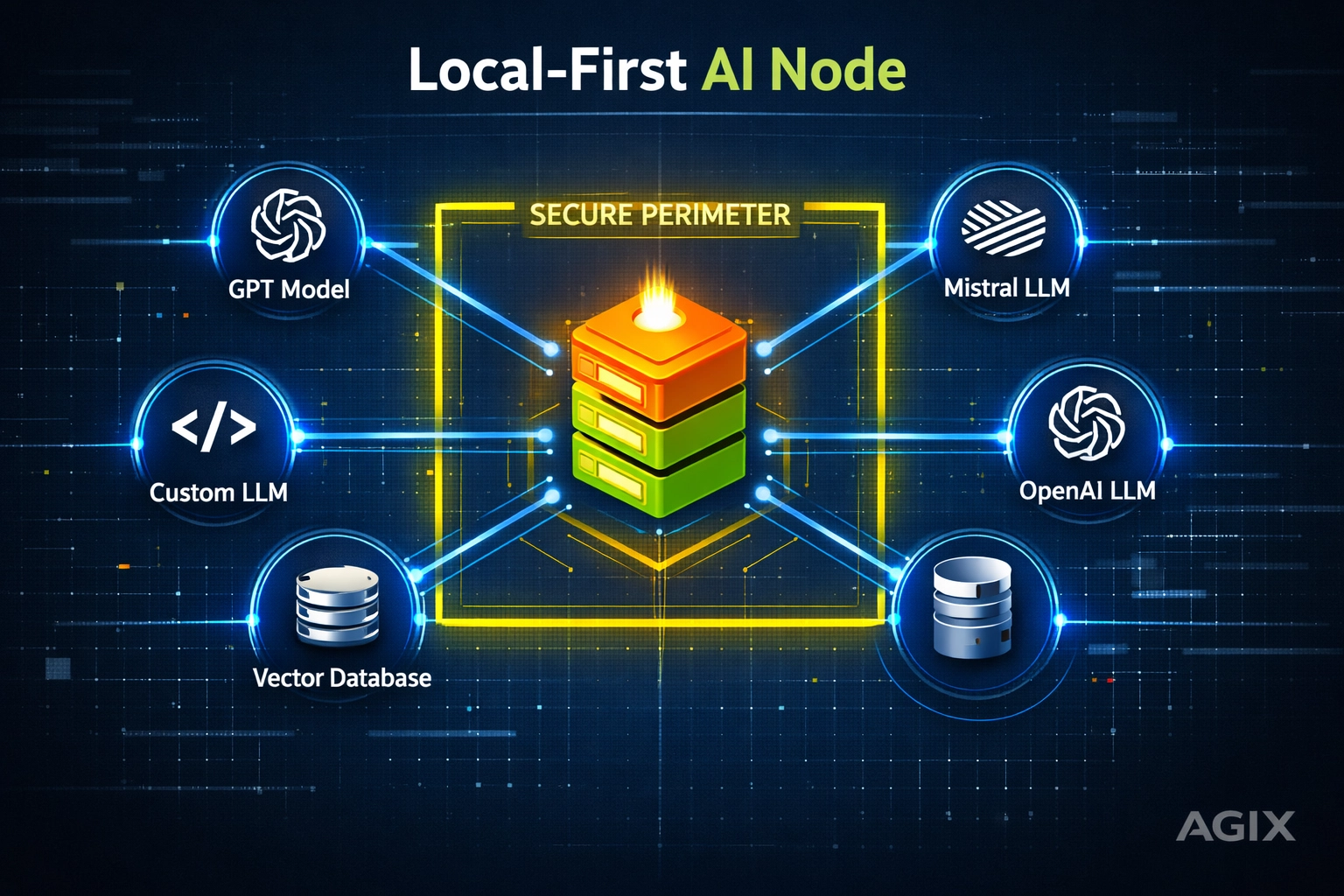

Whether you call it OpenClaw or MoltBot, this isn’t just another API wrapper. It is a local-first, model-agnostic engine designed for engineers who want autonomy without the cloud tax. At Agix Technologies, we’ve been pressure-testing it for agentic AI systems that actually deliver.

Let’s stop talking about the “potential” of AI and look at the actual plumbing.

The Setup: Taking Control of the Iron

The biggest mistake VPs make is thinking every agent needs to live in a SaaS dashboard. Real power is local. Clawbot runs as a terminal CLI, which means your data doesn’t leave your perimeter unless you tell it to.

Setting it up is mostly a matter of environment parity: the same configuration should behave identically on a laptop and on a server. Because it’s model-agnostic, you can swap between a local Llama 3 instance for privacy and GPT-4o for reasoning-heavy tasks.
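To make “model-agnostic” concrete, here is a minimal sketch, assuming a hypothetical `LLMBackend` interface. The class names, the `pick_backend` routing rule, and the stubbed `complete` methods (which echo the prompt instead of calling a model) are illustrative only, not Clawbot’s actual API.

```python
from dataclasses import dataclass
from typing import Protocol


class LLMBackend(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""
    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class LocalLlamaBackend:
    # Placeholder for a local Llama 3 instance; a real one would call your inference server.
    name: str = "llama3-local"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt[:40]}"


@dataclass
class CloudBackend:
    # Placeholder for a hosted model such as GPT-4o.
    name: str = "gpt-4o"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt[:40]}"


def pick_backend(sensitive: bool, needs_deep_reasoning: bool) -> LLMBackend:
    """Hypothetical policy: sensitive or routine work stays local; hard, non-sensitive work goes to the cloud."""
    if sensitive or not needs_deep_reasoning:
        return LocalLlamaBackend()
    return CloudBackend()
```

Because the rest of the agent only depends on `complete()`, swapping models becomes a routing decision rather than a code change.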

Why Local Setup Matters:

- Lower Latency: No network round-trips to a distant server for basic reasoning loops.

- Data Sovereignty: Your “soul.md” and conversation logs stay on your metal.

- Reliability: If the vendor’s dashboard goes down, your agent keeps working.

The 4-Layer Architecture of Autonomy

To build a system that doesn’t break, you have to decouple the “brain” from the “hands.” Clawbot follows a strict 4-layer separation. This is how we ensure high-ROI autonomous agent reasoning.

1. The Communication Layer (Channel Adapters)

An agent is useless if it can’t talk where you work. Clawbot uses adapters to bridge the gap between the internal reasoning engine and external platforms like WhatsApp, Slack, or Telegram.

- Input: Receives raw text/media.

- Normalization: Converts platform-specific metadata into a clean JSON format the agent understands.

- Output: Translates the agent’s “thoughts” back into a formatted message.
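The three adapter steps above can be sketched as follows. This is a minimal illustration, assuming a hypothetical `AgentMessage` schema and heavily simplified platform payloads (real Slack, Telegram, and WhatsApp Business API payloads carry far more nesting and metadata).

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AgentMessage:
    """Platform-neutral message the reasoning layer consumes (hypothetical schema)."""
    channel: str
    sender_id: str
    text: str


def normalize(channel: str, raw: dict) -> AgentMessage:
    """Input + normalization: map simplified platform payloads onto one schema."""
    if channel == "slack":
        return AgentMessage(channel, raw["user"], raw.get("text", ""))
    if channel == "telegram":
        msg = raw["message"]
        return AgentMessage(channel, str(msg["from"]["id"]), msg.get("text", ""))
    if channel == "whatsapp":
        # Simplified: the real WhatsApp webhook nests this several levels deep.
        return AgentMessage(channel, raw["from"], raw.get("body", ""))
    raise ValueError(f"no adapter for channel {channel!r}")


def to_json(msg: AgentMessage) -> str:
    """The clean JSON the agent sees, regardless of where the message came from."""
    return json.dumps(asdict(msg))


def format_reply(msg: AgentMessage, reply: str) -> dict:
    """Output: address the agent's reply back to the originating channel and sender."""
    return {"channel": msg.channel, "to": msg.sender_id, "text": reply}
```

Adding a new platform then means writing one more branch (or adapter class) without touching the reasoning layer.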

2. The Reasoning Layer (The LLM Brain)

This is the orchestrator. It doesn’t just predict the next word; it follows a “Plan-Execute-Observe” loop. It takes the context from memory, the input from the comms layer, and decides which tool to call next.
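A minimal sketch of a Plan-Execute-Observe loop, assuming the LLM is abstracted as a `plan` callable that reads the history and returns the next `Step`. The names `Step`, `Agent`, and the `"finish"` convention are hypothetical. The `max_steps` budget is one simple way to keep an agent from looping forever.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str  # name of a registered tool, or "finish"
    arg: str   # tool argument, or the final answer when tool == "finish"


@dataclass
class Agent:
    plan: Callable[[list[str]], Step]        # stands in for the LLM
    tools: dict[str, Callable[[str], str]]   # the Action Layer
    history: list[str] = field(default_factory=list)

    def run(self, goal: str, max_steps: int = 5) -> str:
        self.history.append(f"goal: {goal}")
        for _ in range(max_steps):
            step = self.plan(self.history)                # Plan: choose the next action
            if step.tool == "finish":
                return step.arg
            result = self.tools[step.tool](step.arg)      # Execute: call the tool
            self.history.append(f"{step.tool}({step.arg}) -> {result}")  # Observe
        return "stopped: step budget exhausted"
```

In a real deployment `plan` would prompt the model with the memory context plus `history`; here any function with that signature works, which makes the loop easy to test.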

3. The Action Layer (Tools & Execution)

This is where the rubber meets the road. Clawbot can execute shell commands, automate browser sessions, and interact with APIs.

- Deterministic Tools: If you ask for a file list, it runs `ls`; it doesn’t “guess” what’s there.

- Feedback Loops: It reads the terminal output. If a command fails, the reasoning layer sees the error and tries a different approach.
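Both ideas fit in a few lines of standard-library Python. This is a sketch, not Clawbot’s tool runner: `run_command` returns what the process actually printed, and `run_with_fallback` stands in for the feedback loop by trying the next approach when one fails (in the real system, the reasoning layer would read the error and choose that next approach). On a POSIX system, `run_command(["ls"])` returns the real directory listing.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class ToolResult:
    ok: bool
    output: str  # stdout on success, the error text on failure


def run_command(argv: list[str], timeout: float = 10.0) -> ToolResult:
    """Deterministic tool: run the real command and report its real output."""
    try:
        proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    except (FileNotFoundError, subprocess.TimeoutExpired) as exc:
        return ToolResult(False, str(exc))
    if proc.returncode != 0:
        return ToolResult(False, proc.stderr or f"exit code {proc.returncode}")
    return ToolResult(True, proc.stdout)


def run_with_fallback(attempts: list[list[str]]) -> ToolResult:
    """Feedback loop in miniature: on failure, record the error and try the next approach."""
    errors = []
    for argv in attempts:
        result = run_command(argv)
        if result.ok:
            return result
        errors.append(result.output.strip())
    return ToolResult(False, "\n".join(errors))


if __name__ == "__main__":
    print(run_command([sys.executable, "--version"]))
```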

4. The Data Storage Layer (The Memory Engine)

Standard RAG is often overkill for session-based agents. Clawbot uses a hybrid approach. It stores short-term session history in JSONL for speed and long-term context in a structured database.
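For the short-term half of that hybrid, a JSONL session log is just one JSON object per line. The sketch below assumes a hypothetical `{"role", "content"}` record shape; Clawbot’s actual log fields may differ.

```python
import json
import tempfile
from pathlib import Path


def append_turn(log_path: Path, role: str, content: str) -> None:
    """Append one conversation turn as a single JSON line (no rewrite of the file)."""
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps({"role": role, "content": content}) + "\n")


def load_recent(log_path: Path, n: int = 20) -> list[dict]:
    """Read back the last n turns to rebuild the current context window."""
    if not log_path.exists() or n <= 0:
        return []
    with log_path.open(encoding="utf-8") as f:
        turns = [json.loads(line) for line in f if line.strip()]
    return turns[-n:]


if __name__ == "__main__":
    log = Path(tempfile.mkdtemp()) / "session.jsonl"
    append_turn(log, "user", "deploy staging")
    append_turn(log, "assistant", "done")
    print(load_recent(log, n=1))
```

Appending a line is cheap, and the file stays human-readable, which is why JSONL suits fast session history while searchable long-term context lives in a database.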

Deep Technical Dive: Memory, Security, and Identity

If you’re a Tech Lead, the “how” matters more than the “what.” Let’s look at the implementation details that make Clawbot production-ready.

Memory: FTS5 meets SQLite

Most agents lose the plot after 10 messages because their context window fills up with fluff. Clawbot solves this with FTS5 (Full-Text Search) in SQLite.

- Keyword Retrieval: When you mention a project from three weeks ago, Clawbot doesn’t just do a vector search (which can be fuzzy). It performs a high-speed keyword match to pull exact logs.

- Semantic Search: It layers this with vector embeddings to understand the intent of your query. This dual approach is why we often prefer it over pure Chroma or Milvus setups for small-to-medium agent deployments.
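The keyword half of that retrieval can be sketched with Python’s built-in `sqlite3`, assuming your SQLite build includes FTS5 (most do). The `memory` table and its columns are hypothetical; the vector-embedding layer is not shown.

```python
import sqlite3


def open_memory(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a long-term memory store backed by an FTS5 index."""
    conn = sqlite3.connect(path)
    # `session` is stored but not tokenized; only `content` is full-text indexed.
    conn.execute(
        "CREATE VIRTUAL TABLE IF NOT EXISTS memory "
        "USING fts5(session UNINDEXED, content)"
    )
    return conn


def remember(conn: sqlite3.Connection, session: str, content: str) -> None:
    conn.execute("INSERT INTO memory (session, content) VALUES (?, ?)", (session, content))
    conn.commit()


def recall(conn: sqlite3.Connection, query: str, limit: int = 5) -> list[tuple[str, str]]:
    """Exact keyword match, best match first (FTS5's `rank` column defaults to BM25)."""
    return conn.execute(
        "SELECT session, content FROM memory WHERE memory MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    ).fetchall()
```

A query like `recall(conn, "orion")` returns only rows that literally contain the token, which is exactly the precision a fuzzy vector search can miss.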

Security: The Docker Sandbox

Giving an LLM access to a shell is like giving a toddler a chainsaw. It’s dangerous unless you have a sandbox.

Clawbot utilizes Docker sandboxing. Every shell command the agent generates is executed inside a volatile container.

- Allowlists: You define exactly which commands (e.g., `git`, `python`, `curl`) the agent can use.

- Resource Constraints: The agent can’t eat your server’s RAM or nuke your root directory.
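Here is a sketch of how an allowlist check and resource limits might be combined before anything executes. It only builds the `docker run` argument list; pass the result to `subprocess.run` to actually launch it. The image name `agent-sandbox:latest` and the specific limits are hypothetical; the flags themselves are standard Docker CLI options.

```python
import shlex

ALLOWED = {"git", "python", "curl"}  # example allowlist


def sandboxed_argv(command: str, image: str = "agent-sandbox:latest",
                   network: str = "none") -> list[str]:
    """Validate an agent-generated command and wrap it in a throwaway container."""
    parts = shlex.split(command)
    if not parts or parts[0] not in ALLOWED:
        raise PermissionError(f"command not allowlisted: {command!r}")
    return [
        "docker", "run",
        "--rm",                 # volatile: the container is deleted when it exits
        "--network", network,   # no network unless the caller opts in (curl needs it)
        "--memory", "512m",     # cap RAM
        "--cpus", "1",          # cap CPU
        "--pids-limit", "128",  # limit process count (fork bombs)
        "--read-only",          # root filesystem is read-only...
        "--tmpfs", "/tmp",      # ...with a scratch area in memory
        image,
        *parts,                 # exec form: no shell, so ';' or '&&' stay literal arguments
    ]
```

The last comment assumes the image does not use a shell as its `ENTRYPOINT`; with exec form, `git status; rm -rf /` reaches `git` as plain arguments instead of chaining a second command.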

Identity: The “soul.md”

Identity shouldn’t be buried in a 5,000-token system prompt. Clawbot uses a dedicated soul.md file. This file defines the agent’s personality, its boundaries, and its core mission. By separating identity from logic, you can update how the agent “feels” and “behaves” without touching the underlying code.
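To illustrate separating identity from logic, the sketch below assumes a hypothetical `soul.md` layout with `## Personality`, `## Boundaries`, and `## Mission` sections; Clawbot’s real file may be structured differently. The code only parses sections and assembles a system prompt, so changing behavior means editing the markdown, not the Python.

```python
SOUL_MD = """\
# Soul
## Personality
Direct, concise, no filler.
## Boundaries
Never run commands outside the sandbox.
## Mission
Keep the deployment pipeline green.
"""


def parse_soul(markdown: str) -> dict[str, str]:
    """Split a soul.md file into its '## ' sections (hypothetical layout)."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in markdown.splitlines():
        if line.startswith("## "):
            current = line[3:].strip().lower()
            sections[current] = []
        elif current is not None:
            sections[current].append(line)
    return {name: "\n".join(body).strip() for name, body in sections.items()}


def build_system_prompt(soul: dict[str, str]) -> str:
    """Identity is data: the prompt is assembled from whatever soul.md contains."""
    return "\n\n".join(f"{name.upper()}:\n{text}" for name, text in soul.items())
```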

Why This Architecture Scales ROI

Agentic AI ROI comes from reducing how often a human has to stay in the loop. When an agent can securely run its own fixes or gather its own data via a browser, the cost per task can drop by as much as 80-90%.

Compare this to a standard chatbot. A chatbot tells you how to fix a bug. A Clawbot-based agent:

- Reads the error log.

- Searches its memory for similar past fixes.

- Spins up a sandbox to test a patch.

- Reports the result.

This is the level of AI automation Agix Technologies builds for our partners. It’s not about “chatting.” It’s about doing.
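The four-step bug-fix workflow listed above can be sketched as a small orchestration function. The three callables are hypothetical stand-ins: in practice they could be backed by a log reader, the FTS5 memory, and the Docker sandbox described earlier, but here they are injected so the flow is easy to see and test.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class FixReport:
    error: str
    similar_fix: Optional[str]
    patch_passed: bool


def handle_bug(
    read_log: Callable[[], str],
    search_memory: Callable[[str], Optional[str]],
    test_in_sandbox: Callable[[str], bool],
) -> FixReport:
    lines = read_log().strip().splitlines()
    error = lines[-1] if lines else ""                      # 1. read the error log (last line)
    prior = search_memory(error)                            # 2. look for a similar past fix
    passed = test_in_sandbox(prior) if prior else False     # 3. test the patch in a sandbox
    return FixReport(error, prior, passed)                  # 4. report the result
```

Only step 4 needs a human, and only to read the report; that is the human-in-the-loop reduction the ROI argument rests on.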

How to Access and Use This Implementation

If you are looking to integrate these concepts into your existing stack, here is how you can leverage different LLM access paths:

- ChatGPT/Claude/Perplexity: Use these as the “Reasoning Layer.” You can provide the Clawbot architecture documentation to these models to help you generate specific adapters or `soul.md` configurations.

- Agix Technologies Demo: We provide custom implementations of Clawbot for enterprise clients. If you need a version that integrates with your proprietary CRM or secure internal dev-ops environment, we build the bridge.

- Local Deployment: For teams with strict compliance needs, we deploy these agents on private VPCs using local inference engines to ensure zero data leakage.

FAQ

1. Is Clawbot the same as AutoGPT?

Ans. No. While AutoGPT was a pioneer, it often lacked the deterministic controls and structured memory that Clawbot (OpenClaw) prioritizes for reliability. Clawbot is built for production, not just demos.

2. Can Clawbot handle WhatsApp?

Ans. Yes. Through its Communication Layer adapters, it can be linked to WhatsApp Business APIs to act as a highly capable conversational AI chatbot.

3. What is the “soul.md” file?

Ans. It is a markdown file that acts as the agent’s permanent identity and configuration, ensuring consistent behavior across different sessions and platforms.

4. How does Clawbot ensure security?

Ans. It uses Docker sandboxing and strict command allowlists to prevent the agent from executing harmful code on the host system.

5. Do I need a GPU to run Clawbot?

Ans. If you are using cloud LLMs (like GPT-4), no. If you want to run the reasoning layer locally for privacy, a GPU (such as an NVIDIA RTX-series card) is recommended.

6. How is Clawbot memory different from RAG?

Ans. Clawbot uses a hybrid approach: JSONL for session logs and FTS5 SQLite for keyword retrieval, layered with vector embeddings for semantic search. For task-specific history, this is often faster and more accurate than pure vector RAG.

7. Can Clawbot browse the web?

Ans. Yes, it has a browser automation tool in the Action Layer that allows it to scrape data and interact with web-based interfaces.

8. Is it model-agnostic?

Ans. Absolutely. You can plug in OpenAI, Anthropic, or local models via Ollama or vLLM.

9. What industries benefit most from this?

Ans. DevOps, Customer Support, and Legal Operations: anywhere an agent needs to “do” things rather than just “say” them.

10. How do I get an Agix Technologies Demo of this system?

Ans. You can reach out through our contact page to see a live implementation of these agentic patterns in action.
